Operationalizing Mandiant's Attack Lifecycle, the Kill Chain, MITRE ATT&CK, and the Diamond Model with Practical Examples

From individual incident response to tracking adversaries across campaigns. Activity threading, analytic pivoting, and turning your own incidents into detection opportunities and structured threat intelligence.

When I was first getting started in cybersecurity, MITRE's ATT&CK had only just launched and wasn't widely known. I'd always thought the Diamond Model and Kill Chain were academic ways of thinking about attacks, not frameworks you could actually align operations to.

That changed over time. Working with mentors like Brandon Poole, being part of organizations with strong threat intelligence programs, earning the GCTI, and reading Intelligence-Driven Incident Response[1] all shifted my understanding. I work with each framework actively now, nearly every day.

Something that really made them click for me was getting a visual understanding of how they fit together. I'm a very visual person, and once I could see the relationships mapped out, the operational value became obvious. So I thought I'd write a guide showing how you can apply them in practice.

I'm going to assume you're familiar with the basics of each framework individually. I'll cover them briefly as a refresher before getting into the real focus: how to layer the Diamond Model with ATT&CK and the Kill Chain into something you can actually use.

The Targeted Attack Lifecycle

Mandiant's Targeted Attack Lifecycle[2] grew out of the same lineage as Lockheed Martin's Kill Chain, but it better reflects how intrusions actually unfold. The key difference is the loop. After an attacker establishes a foothold, the cycle of escalating privileges, internal reconnaissance, lateral movement, and maintaining presence repeats until the adversary completes their mission or gets caught. That loop matches what I see in real investigations.

When an alert fires, my first question is always "where am I in this cycle?" The answer isn't always obvious, but it narrows what to look for next, and I keep working through the loop, following the evidence until I can confirm or rule out a true positive. It's the framework I reach for during an active investigation.

For looking at an intrusion retroactively, start to finish, I actually prefer the classic Kill Chain, which is where we'll go next.


Adapted from Mandiant — Targeted Attack Lifecycle, M-Trends Annual Threat Report

The Kill Chain & MITRE ATT&CK

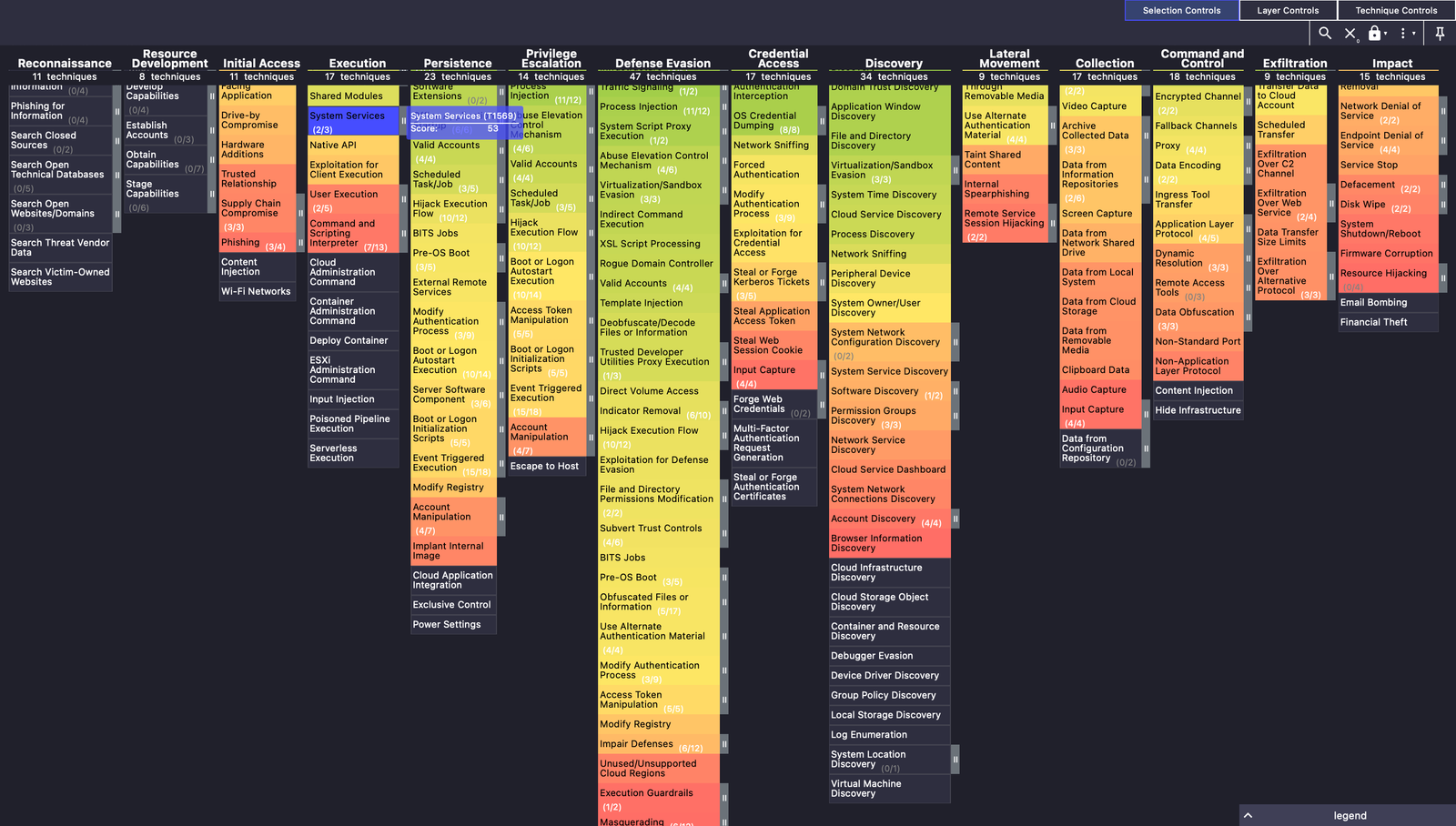

The Kill Chain and ATT&CK cover a lot of the same ground. ATT&CK's tactics map roughly to Kill Chain phases, and both describe the progression of an intrusion from initial access through to the adversary's end goal. Where they differ is in granularity and in how they can be used. Let's look at each in more detail.


Adapted from Hutchins, Cloppert & Amin — Intelligence-Driven Computer Network Defense (2011) · MITRE ATT&CK® Enterprise v16

The Kill Chain

Where the Attack Lifecycle is my go-to during an active investigation, the Cyber Kill Chain[3] is what I reach for when reviewing an intrusion after the fact, end to end. Lockheed Martin's original seven phases (Reconnaissance, Weaponization, Delivery, Exploitation, Installation, Command & Control, and Actions on Objectives; eight if you're in the government, with Targeting added at the front) give you a clean linear structure for mapping out what happened and, more importantly, where you could have stopped it.

That said, the model has some fuzzy edges. The "Installation" phase has always bothered me. On a traditional malware intrusion it makes sense: something gets dropped to disk. But what about a Business Email Compromise (BEC)? What exactly is being "installed"? My own mental model splits that phase into two concepts: action execution and persistence. Take a BEC scenario: an adversary finds valid credentials in a breach dump. They weaponize them through automated credential stuffing. They exploit the lack of MFA, which executes the action of unauthorized access. Then they persist by adding a phone number they control as a second MFA factor, or by creating inbox forwarding rules. I think of the C2 phase more broadly as the "interaction phase," where some continued human or automated engagement happens after persistence is established, followed by Actions on Objectives as normal. It's not a perfect mapping, but it has fewer edge cases in practice.

Though it's no longer in vogue, the Kill Chain is my most-used framework as a security researcher writing detections. It's still the one I think every SOC should internalize, even if informally. Your own incidents are one of the best sources of threat intelligence you have, especially for building detections specific to your organization. Anything that makes it past the Delivery phase successfully is an intrusion, even if you contain it within seconds, and should be evaluated for additional detection opportunities.

Say a threat actor gets something past your defenses and you don't catch it until the C2 beacon goes out, at which point your IPS blocks it. As a detection engineer, I always work my way back up and down the Kill Chain, looking for where else this could have been detected, and where it couldn't have been, I document the visibility gap.

A common misconception, often repeated even by well-regarded researchers, is that you can't detect anything before the Delivery phase. That's wrong.

Reconnaissance can sometimes be detected, but in most cases it's invisible, or the effort to operationalize detection isn't worth the value. For example, deconflicting scanning activity at your firewall's edge with a service like GreyNoise can identify an adversary scanning your organization specifically for vulnerabilities before an attack. Have any other devices in your infrastructure already called out to the IP in question, or to similar hosting infrastructure such as the same ASN? If so, was that activity legitimate?
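To make the deconfliction step concrete, here is a minimal Python sketch under some stated assumptions. You already have edge deny logs and an IP-to-ASN lookup. You also have egress logs and a set of IPs known to be internet-wide background scanners (for example, exported from a service like GreyNoise; no GreyNoise API is called here). The function name and input shapes are hypothetical, and the IPs and ASNs come from documentation ranges.

```python
from collections import defaultdict


def find_targeted_scanners(firewall_denies, background_scanners, ip_to_asn, outbound):
    """Flag inbound scanners that are NOT known internet-wide noise, then check
    whether any internal host has already talked to the same IP or ASN.

    firewall_denies:     iterable of (src_ip, dst_port) from edge deny logs
    background_scanners: set of IPs known to scan the whole internet (hypothetical export)
    ip_to_asn:           dict mapping IP -> ASN (hypothetical enrichment)
    outbound:            iterable of (internal_host, dst_ip) from egress logs
    """
    outbound = list(outbound)  # iterated once per scanner below

    # Deconflict: keep only sources that aren't background internet noise.
    ports_by_src = defaultdict(set)
    for src_ip, dst_port in firewall_denies:
        if src_ip not in background_scanners:
            ports_by_src[src_ip].add(dst_port)

    findings = {}
    for src_ip, ports in ports_by_src.items():
        asn = ip_to_asn.get(src_ip)
        # Internal hosts that already contacted this exact IP.
        hosts_to_ip = sorted({host for host, dst in outbound if dst == src_ip})
        # Internal hosts that contacted *other* IPs in the same ASN.
        hosts_to_asn = sorted({
            host for host, dst in outbound
            if asn is not None and dst != src_ip and ip_to_asn.get(dst) == asn
        })
        findings[src_ip] = {
            "ports": sorted(ports),
            "asn": asn,
            "hosts_to_ip": hosts_to_ip,
            "hosts_to_asn": hosts_to_asn,
        }
    return findings


denies = [("203.0.113.5", 22), ("203.0.113.5", 443), ("192.0.2.10", 3389)]
noise = {"192.0.2.10"}                       # known background scanner
asns = {"203.0.113.5": 64500, "203.0.113.99": 64500}
egress = [("ws-042", "203.0.113.99")]        # a workstation already talked to that ASN
print(find_targeted_scanners(denies, noise, asns, egress))
```

In this toy data, 192.0.2.10 drops out as background noise. 203.0.113.5 survives, and ws-042 is worth a look because it already talked to another IP in the same ASN. Whether that contact was legitimate is still the analyst's call.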

At the Weaponization phase, was the domain some variation of your brand name? A letter swapped, an l replaced with an I? Brand reputation services can monitor for lookalike domains, and if this one slipped through, there may be a pattern you can pull from it to catch similar domains next time.
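Here is one way to pull a reusable pattern out of a lookalike domain, sketched in Python. It lowercases the label, collapses a few common homoglyphs into a "skeleton," and then measures edit distance to your brand. The homoglyph table, the brand "examplecorp," and the one-edit threshold are illustrative assumptions, not a complete confusables list. It also only checks the leftmost label, so subdomain and combo-squats are out of scope.

```python
# Illustrative homoglyph substitutions, not an exhaustive confusables list.
# Multi-character swaps come first so "rn" is collapsed before single chars.
HOMOGLYPHS = [("rn", "m"), ("vv", "w"), ("0", "o"), ("1", "l"), ("i", "l")]


def levenshtein(a: str, b: str) -> int:
    """Edit distance (insertions, deletions, substitutions) via dynamic programming."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, 1):
        curr = [i]
        for j, cb in enumerate(b, 1):
            curr.append(min(prev[j] + 1,                 # delete ca
                            curr[j - 1] + 1,             # insert cb
                            prev[j - 1] + (ca != cb)))   # substitute
        prev = curr
    return prev[-1]


def skeleton(label: str) -> str:
    """Lowercase (DNS is case-insensitive) and collapse look-alike characters."""
    s = label.lower()
    for fake, real in HOMOGLYPHS:
        s = s.replace(fake, real)
    return s


def is_lookalike(domain: str, brand: str, legit_domains: set, max_distance: int = 1) -> bool:
    """True if the domain's leftmost label is within max_distance edits of the
    brand after homoglyph normalization, and the domain isn't one of yours.
    legit_domains is expected to be lowercase."""
    domain = domain.lower().rstrip(".")
    if domain in legit_domains:
        return False
    label = domain.split(".")[0]  # naive: ignores subdomains and combo-squats
    return levenshtein(skeleton(label), skeleton(brand)) <= max_distance


ours = {"examplecorp.com"}
for d in ["exampIecorp.com", "exarnplecorp.com", "examplecorp.net", "weather.com"]:
    print(d, is_lookalike(d, "examplecorp", ours))
```

Comparing skeletons rather than raw strings is what catches the l-for-I swap. That normalization step is often the piece worth carrying forward into whatever monitoring you already use.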

Moving to Delivery, is there a YARA rule that could have been added to the email security gateway or NDR platform that could have caught the payload in flight?

At the Exploitation/Installation phase: why didn't the endpoint detect it? Was a rule overly tuned? An EDR agent in a degraded state? Or was this a genuinely novel technique you hadn't seen before?

For the C2 phase, even though you caught the beacon, are there overlapping indicators you missed? Maybe the IPS flagged an unusual TLD, but there's also a distinct URI pattern that would make a great new NDR rule.
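A quick way to check whether an overlapping indicator adds coverage is to replay proxy logs against both indicators. Then look for requests that only the new one catches. In the Python sketch below, the TLD set, the URI regex, and the log records are all hypothetical examples standing in for whatever one real incident gives you.

```python
import re

# Example indicators from a single incident's C2 traffic (hypothetical values).
SUSPICIOUS_TLDS = {"top", "zip"}                               # what the IPS keyed on
BEACON_URI = re.compile(r"^/api/v\d/[0-9a-f]{16}/sync$")       # URI shape seen in the beacon


def indicators_hit(host: str, uri: str) -> set:
    """Return which indicators a single proxy request matches."""
    hits = set()
    if host.lower().rstrip(".").rsplit(".", 1)[-1] in SUSPICIOUS_TLDS:
        hits.add("tld")
    if BEACON_URI.match(uri):
        hits.add("uri")
    return hits


def uri_only_hits(proxy_log):
    """Requests the URI pattern would catch that the TLD rule alone missed."""
    return [(host, uri) for host, uri in proxy_log if indicators_hit(host, uri) == {"uri"}]


log = [
    ("cdn-update.top", "/api/v2/0123456789abcdef/sync"),    # both indicators
    ("files.example.net", "/api/v3/fedcba9876543210/sync"), # URI only: new coverage
    ("www.example.com", "/index.html"),                     # neither
]
print(uri_only_hits(log))
```

Any URI-only hits are either the same actor on infrastructure the IPS rule never saw, or false positives to tune out before the pattern becomes an NDR rule.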

Why wasn't the persistence caught, and was there an opportunity to catch it? For Actions on Objectives, was there something you could have caught, such as the file extension the ransomware appended to encrypted files?

This kind of retroactive kill chain walkthrough turns a single incident into multiple detection opportunities.
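One lightweight way to capture that walkthrough is a per-phase record of three things: what was detected, what was missed but detectable, and where you had no visibility at all. The Python sketch below assumes Lockheed Martin's seven phases. The class names, the outcome categories, and the incident ID are hypothetical, chosen for illustration rather than taken from any tool.

```python
from dataclasses import dataclass, field
from enum import Enum


class Phase(Enum):
    """Lockheed Martin's seven Kill Chain phases, in order."""
    RECONNAISSANCE = 1
    WEAPONIZATION = 2
    DELIVERY = 3
    EXPLOITATION = 4
    INSTALLATION = 5
    COMMAND_AND_CONTROL = 6
    ACTIONS_ON_OBJECTIVES = 7


class Outcome(Enum):
    DETECTED = "detected"
    OPPORTUNITY = "missed, detection opportunity"
    GAP = "missed, visibility gap"


@dataclass
class PhaseFinding:
    phase: Phase
    outcome: Outcome
    notes: str = ""


@dataclass
class IncidentReview:
    incident_id: str
    findings: list = field(default_factory=list)

    def record(self, phase: Phase, outcome: Outcome, notes: str = "") -> None:
        self.findings.append(PhaseFinding(phase, outcome, notes))

    def phases_with(self, outcome: Outcome) -> list:
        """Phases with the given outcome, in Kill Chain order."""
        hits = [f.phase for f in self.findings if f.outcome is outcome]
        return sorted(hits, key=lambda p: p.value)


review = IncidentReview("IR-0001")  # hypothetical incident ID
review.record(Phase.COMMAND_AND_CONTROL, Outcome.DETECTED, "IPS blocked beacon to unusual TLD")
review.record(Phase.DELIVERY, Outcome.OPPORTUNITY, "payload could be matched in flight by a new YARA rule")
review.record(Phase.EXPLOITATION, Outcome.GAP, "EDR agent degraded, no telemetry")
print([p.name for p in review.phases_with(Outcome.OPPORTUNITY)])
```

Keeping the "opportunity" and "gap" buckets separate matters. The first feeds your detection backlog, and the second feeds your visibility or telemetry backlog.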

MITRE ATT&CK

People often say they want to "align" to the MITRE ATT&CK framework[4], but what does that actually mean? Are you just tagging your detection rules with technique IDs without really understanding why?

ATT&CK is, at its core, a more granular version of the Kill Chain. What it gave the industry is a common language for describing adversary behaviors at each stage of an intrusion. That shared vocabulary has had a measurable effect on the industry's ability to communicate about threats and detection coverage across teams, vendors, and organizations.

Most people think of ATT&CK in terms of detection: mapping rules to techniques, identifying coverage gaps, building heat maps. That detection heat map matters, but there's a second, equally important heat map that doesn't get enough attention: the TTPs actually being used against your organization.

Does your detection coverage align with the behaviors you're actually seeing in your incidents? If not, you may be prioritizing threats that aren't targeting you while leaving gaps in the areas that are. ATT&CK is imperfect and has gaps. It's a framework, not scripture. As long as you understand how to apply it, you can adjust its use to fit your organization, or extend it as you see necessary.
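Comparing the two heat maps can be as simple as set arithmetic over technique IDs. The Python sketch below assumes two inputs. The first is a flat list of ATT&CK IDs observed across your incidents, with one entry per observation. The second is the set of IDs your detection rules are tagged with. The function name and sample data are hypothetical.

```python
from collections import Counter


def coverage_gaps(incident_techniques, detection_techniques):
    """Compare the 'used against us' heat map with the detection heat map.

    incident_techniques:  ATT&CK IDs observed in your incidents, one entry per observation
    detection_techniques: ATT&CK IDs your detection rules are tagged with

    Returns (gaps, not_seen):
      gaps     - observed techniques with no mapped detection, most frequent first
      not_seen - techniques you detect but haven't observed in your own incidents
                 (not necessarily wasted; they may cover threats you haven't met yet)
    """
    observed = Counter(incident_techniques)
    gaps = [(tid, n) for tid, n in observed.most_common() if tid not in detection_techniques]
    not_seen = sorted(set(detection_techniques) - set(observed))
    return gaps, not_seen


incidents = ["T1566.001", "T1078", "T1566.001", "T1114.003", "T1078", "T1566.001"]
rules = {"T1566.001", "T1059.001", "T1003.001"}
gaps, not_seen = coverage_gaps(incidents, rules)
print(gaps)      # observed but undetected, ranked by frequency
print(not_seen)  # detected but not (yet) observed
```

Ranking gaps by how often a technique shows up in your own incidents is one reasonable way to prioritize. Weighting by impact, or by the phase where a detection would land, are equally valid choices.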

What's less commonly known is how ATT&CK fits into the Diamond Model, specifically on the Capability facet. We'll get into that in the next section.

The Diamond Model

The Diamond Model of Intrusion Analysis[5] is primarily used for attribution. Attribution is important, and anyone who tells you otherwise is actively harming your detection program. That said, even large companies with their own threat intelligence teams largely don't do attribution publicly, and often determine it through collaboration with one or more of their security vendors. Your organization probably doesn't need to attribute to the level of determining whether the threat actor targeting you is Volt Typhoon. But identifying and clustering your own intrusion data is valuable on its own.

Four facets form the vertices of a diamond: Adversary, Infrastructure, Capability, and Victim. Every intrusion event maps onto these four points, with edges representing the relationships between them. An adversary employs capabilities, delivered through infrastructure, against a victim.
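As a data structure, a single Diamond event can be as small as four fields. Any of them may be unknown, which is the normal state early in an investigation. The Python sketch below is one assumed representation, not the paper's formal definition; it omits meta-features such as timestamps, phase, and confidence.

```python
from dataclasses import dataclass, field
from typing import Optional, Set


@dataclass
class DiamondEvent:
    """One intrusion event: an adversary employs a capability, delivered
    through infrastructure, against a victim. Unknown facets stay empty."""
    adversary: Optional[str] = None
    capability: Set[str] = field(default_factory=set)
    infrastructure: Set[str] = field(default_factory=set)
    victim: Optional[str] = None

    def populated_facets(self) -> list:
        """Names of the facets you actually have evidence for."""
        facets = {
            "adversary": self.adversary,
            "capability": self.capability,
            "infrastructure": self.infrastructure,
            "victim": self.victim,
        }
        return [name for name, value in facets.items() if value]


# Adversary left unknown, which is the common case early in an investigation.
event = DiamondEvent(
    capability={"T1566.001"},
    infrastructure={"198.51.100[.]47"},
    victim="defense contractor, Windows 11 endpoints",
)
print(event.populated_facets())
```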


Adapted from Caltagirone, Pendergast & Betz — The Diamond Model of Intrusion Analysis (2013)

This is where ATT&CK connects directly. The Capability facet isn't just "they used malware." It's T1566.001, Spearphishing Attachment. T1059.001, PowerShell. T1071.001, Web Protocols for C2. ATT&CK gives you a structured way to populate the Capability facet with specific technique IDs instead of loose descriptions. If you're already tagging your detection rules and mapping incidents to ATT&CK, you're building the Capability facet without realizing it. Keep in mind that ATT&CK isn't all-encompassing, so don't limit the Capability facet to it.
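Because the Capability facet can hold both ATT&CK IDs and things ATT&CK doesn't cover, it helps to keep the two apart when you store or compare them. The Python sketch below does that with a simple ID pattern. It also rolls sub-techniques up to their parent, which is one assumed way to compare events at a coarser level. The "custom loader" entry is a hypothetical placeholder for a non-ATT&CK capability.

```python
import re

# ATT&CK technique or sub-technique ID, e.g. T1059 or T1059.001
ATTACK_ID = re.compile(r"^T\d{4}(?:\.\d{3})?$")


def split_capabilities(capabilities):
    """Separate ATT&CK technique IDs from other capability descriptors
    (malware families, tools, or tradecraft that ATT&CK doesn't cover)."""
    attack, other = set(), set()
    for item in capabilities:
        (attack if ATTACK_ID.match(item) else other).add(item)
    return attack, other


def parent_technique(technique_id: str) -> str:
    """Roll a sub-technique up to its parent, e.g. T1059.001 -> T1059,
    useful when comparing events at a coarser level."""
    return technique_id.split(".")[0]


capability = {"T1566.001", "T1059.001", "T1071.001", "custom loader (hypothetical)"}
attack, other = split_capabilities(capability)
print(sorted(attack), sorted(other))
print(sorted({parent_technique(t) for t in attack}))
```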

But describing a single event isn't where the Diamond Model's value is. The real value shows up when you lay multiple events next to each other. Every facet becomes a pivot point. Same C2 infrastructure across two incidents? Possibly the same adversary. Same capability set targeting different victims in the same vertical? Worth investigating whether those are related campaigns. Same adversary, new infrastructure, different techniques? They're retooling, and you're watching it happen in structured data rather than gut feel.
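Treating every facet as a pivot point amounts to asking, for each pair of events, which facet values they share. In the Python sketch below, each event is a plain dict mapping facet names to sets of values. That representation, the event IDs, and the sample values are assumptions for illustration. The code reports every shared value, but deciding whether an overlap actually means something is still analyst work.

```python
from itertools import combinations

FACETS = ("adversary", "capability", "infrastructure", "victim")


def shared_facets(a: dict, b: dict) -> dict:
    """Facet values two events have in common, keyed by facet name.
    Each event is a dict mapping facet name -> set of values."""
    overlap = {}
    for facet in FACETS:
        common = a.get(facet, set()) & b.get(facet, set())
        if common:
            overlap[facet] = common
    return overlap


def pivots(events: dict):
    """Yield (id_a, id_b, overlap) for every pair of events sharing any facet value."""
    for (id_a, a), (id_b, b) in combinations(events.items(), 2):
        overlap = shared_facets(a, b)
        if overlap:
            yield id_a, id_b, overlap


events = {
    "IR-1": {"infrastructure": {"198.51.100[.]47"}, "capability": {"T1566.001"}},
    "IR-2": {"infrastructure": {"198.51.100[.]47"}, "capability": {"T1190"}},
    "IR-3": {"capability": {"T1190"}, "victim": {"finance"}},
}
for id_a, id_b, overlap in pivots(events):
    print(id_a, id_b, overlap)
```

Pairwise comparison is quadratic in the number of events. That is fine for one organization's incident history, but an index from facet value to event IDs would scale better.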

Whether you call a cluster "intrusion cluster 1" or manage to figure out the industry name (if there is one), the result is the same. You can prioritize detection content based on which threat actors are likely to target your vertical, and if you determine the same actor is behind most of your intrusions, you can actively add friction to force them to change their TTPs. Maybe they always use a certain ASN or set of ASNs you don't have legitimate traffic to, and blocking those forces them to move infrastructure. Maybe they rely on low-quality TLDs you can scrutinize further or block outright. Each forced change makes your organization a harder target than the next one. Remember, you don't have to be faster than the bear; you just have to be faster than everyone else.
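The ASN idea can be sketched as a join between two things: the infrastructure a cluster keeps reusing, and a baseline of your own legitimate traffic. The Python below uses hypothetical function and parameter names and documentation-range ASNs. The thresholds (seen in at least two intrusions, zero legitimate flows) are assumptions you would tune before blocking anything.

```python
from collections import Counter


def asn_block_candidates(cluster_intrusions, legit_flows_by_asn,
                         min_intrusions=2, max_legit_flows=0):
    """ASNs an intrusion cluster keeps reusing that your legitimate traffic
    (almost) never touches.

    cluster_intrusions: list of ASN sets, one per intrusion attributed to the cluster
    legit_flows_by_asn: ASN -> count of known-good flows over some baseline window
    """
    seen_in = Counter(asn for asns in cluster_intrusions for asn in set(asns))
    return sorted(
        asn for asn, n in seen_in.items()
        if n >= min_intrusions and legit_flows_by_asn.get(asn, 0) <= max_legit_flows
    )


cluster = [{64500, 64501}, {64500, 64502}, {64500, 64501}]
baseline = {64501: 120}   # 64501 also carries legitimate business traffic
print(asn_block_candidates(cluster, baseline))
```

Only 64500 qualifies here. 64501 is reused but carries business traffic, and 64502 appeared once.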

Now consider what happens when you combine this with the Kill Chain. Each phase of the Kill Chain can be modeled as its own Diamond event, with all four facets populated. String those events together across an intrusion and you get an activity thread. Compare that thread against threads from other intrusions, and overlapping facets start to surface. That's how you move from responding to individual incidents to identifying the same adversary across campaigns, even after they've burned their infrastructure and swapped their tooling.

Putting It Together

Take the Diamond Model diagram above. That single diamond represents one event in an intrusion. An adversary (APT29) used specific capabilities (T1566.001 Spearphishing, T1059.001 PowerShell, T1071.001 Web Protocols) delivered through infrastructure (a compromised WordPress server at 198.51.100[.]47) against a victim (defense contractor, Windows 11 endpoints). One event, four facets, three ATT&CK technique IDs on the Capability vertex.

Now imagine a diamond for every phase of the Kill Chain. Reconnaissance gets its own event. So does Delivery, and so do Exploitation, persistence, C2, and Actions on Objectives. Each has its own four facets populated with whatever evidence you have. String them together and you get what Caltagirone, Pendergast, and Betz call an activity thread: a sequence of Diamond events that maps the full progression of a single intrusion.
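A bare-bones activity thread is a list of one intrusion's Diamond events ordered by Kill Chain phase. The phases with no events are worth listing too, since they are where your visibility may be thin. This Python sketch assumes each event is a dict with a "phase" key and uses Lockheed Martin's phase names, with "installation" standing in for persistence. It is a simplification of the paper's threads, which also model the causal arcs between events.

```python
# Lockheed Martin phase names; "installation" stands in for persistence here.
PHASES = ["reconnaissance", "weaponization", "delivery", "exploitation",
          "installation", "command_and_control", "actions_on_objectives"]
PHASE_ORDER = {phase: i for i, phase in enumerate(PHASES)}


def build_thread(events):
    """Order one intrusion's Diamond events by Kill Chain phase.

    events: list of dicts, each with a "phase" key plus facet keys.
    Returns (thread, missing_phases). Several events may share a phase;
    sorted() is stable, so they keep their original relative order.
    """
    thread = sorted(events, key=lambda e: PHASE_ORDER[e["phase"]])
    present = {e["phase"] for e in events}
    missing = [p for p in PHASES if p not in present]
    return thread, missing


events = [
    {"phase": "command_and_control", "infrastructure": {"198.51.100[.]47"}, "capability": {"T1071.001"}},
    {"phase": "delivery", "capability": {"T1566.001"}, "victim": {"defense contractor"}},
    {"phase": "exploitation", "capability": {"T1059.001"}},
]
thread, missing = build_thread(events)
print([e["phase"] for e in thread])
print(missing)
```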


Adapted from Figure 6, "The Diamond Model of Intrusion Analysis" — Caltagirone, Pendergast, Betz (2013)

Where it gets interesting is comparing threads. Run the same process on your next intrusion. If the C2 infrastructure overlaps, or if the capability set shares ATT&CK techniques or the same malware family, those shared facets are pivot points. When enough facets overlap across separate intrusions, you're likely looking at an activity group: a cluster of related events that traces back to the same adversary or campaign, even after they've changed individual indicators between intrusions. It's important to remember that you should treat every detection as a separate intrusion until you have evidence that it's related to the rest of the activity. It's not uncommon for an organization to discover it had already been breached by a different, stealthier threat actor, one that was only found because a later intrusion triggered an in-depth investigation.
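A crude version of activity grouping can be sketched with single-linkage clustering over intrusions. Each intrusion is summarized as the union of facet values across its thread. Every intrusion starts in its own group, matching the advice to treat detections as separate until evidence links them. Two intrusions are merged only when they share values in at least `min_shared_facets` facets. The threshold and the equal weighting of facets are assumptions. A shared, very common technique like T1059.001 is far weaker evidence than a shared C2 IP, so in practice you would likely weight overlaps by rarity.

```python
FACETS = ("adversary", "capability", "infrastructure", "victim")


def overlapping_facets(a: dict, b: dict) -> int:
    """Number of facets in which two intrusions share at least one value."""
    return sum(1 for f in FACETS if a.get(f, set()) & b.get(f, set()))


def activity_groups(intrusions: dict, min_shared_facets: int = 2) -> list:
    """Single-linkage grouping with union-find.

    intrusions: dict intrusion_id -> {facet: set(values)} (union over its thread)
    Returns a sorted list of groups, each a list of intrusion IDs.
    """
    parent = {i: i for i in intrusions}

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving
            x = parent[x]
        return x

    ids = list(intrusions)
    for i, x in enumerate(ids):
        for y in ids[i + 1:]:
            if overlapping_facets(intrusions[x], intrusions[y]) >= min_shared_facets:
                parent[find(x)] = find(y)

    groups = {}
    for i in ids:
        groups.setdefault(find(i), []).append(i)
    return sorted(groups.values())


intrusions = {
    "IR-1": {"infrastructure": {"198.51.100[.]47"}, "capability": {"T1566.001", "T1059.001"}},
    "IR-2": {"infrastructure": {"198.51.100[.]47"}, "capability": {"T1059.001"}},
    "IR-3": {"capability": {"T1059.001"}, "victim": {"finance"}},
}
print(activity_groups(intrusions))
```

With the default threshold, IR-1 and IR-2 group together because they share both infrastructure and capability. IR-3 stays on its own because a single shared PowerShell technique is not enough evidence.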

I've given you the 30,000-foot view. The original paper[5] goes into significantly more depth on formalizing analytic pivots, handling partial attribution when you only have two or three facets populated, and how activity groups evolve as new data comes in. If this sounds operationally relevant to your program, it's well worth the read.

Closing

I said at the start that I used to think these were academic ways of thinking about attacks. They're not. Used together, they give you a structured way to learn from every intrusion your organization faces and use what you learn to get ahead of the next one. That's the difference between a reactive security program and a proactive one. Not more tools, not more headcount. Better use of the data you're already collecting.

1. Brown, Rebekah, and Scott J. Roberts. Intelligence-Driven Incident Response: Outwitting the Adversary. 2nd ed., O'Reilly Media, 2023. oreilly.com

2. Mandiant. "Targeted Attack Life Cycle." Google Cloud Security Resources. Accessed March 12, 2026. https://cloud.google.com/security/resources/insights/targeted-attack-lifecycle

3. Hutchins, Eric M., Michael J. Cloppert, and Rohan M. Amin. "Intelligence-Driven Computer Network Defense Informed by Analysis of Adversary Campaigns and Intrusion Kill Chains." Lockheed Martin, 2011. lockheedmartin.com

4. MITRE. "ATT&CK Matrix for Enterprise." MITRE ATT&CK. Accessed March 12, 2026. https://attack.mitre.org/matrices/enterprise/

5. Caltagirone, Sergio, Andrew Pendergast, and Christopher Betz. "The Diamond Model of Intrusion Analysis." Center for Cyber Intelligence Analysis and Threat Research, 2013. https://www.activeresponse.org/wp-content/uploads/2013/07/diamond.pdf
